As reported in my last post, I have decided to discontinue the Learn Linux Now project and site. I found that I just didn’t have the time to keep producing content.

New site, new videos

Even though I don’t post here that often any longer, I thought it prudent to post about a new adventure I am undertaking. I started a new video series on YouTube and am posting it to a new site called Learn Linux Now. You can view the first post and video in my introduction, which explains why I am starting this series.

Scan your network and alert of new unknown clients

I’ve been looking for a way to scan my home network and alert me via email when a new device, by MAC address, is seen on my network. At one point I tried completing this with a shell script on my server using a flatfile database of known MAC addresses but either 1.) my shell-script-fu wasn’t strong enough to get the job done or 2.) I needed something more powerful, like python. Enter python.

Working at NASA I am surrounded by some incredibly intelligent people. So I decided to ask one of my co-workers to assist me with writing a python script. This is what we came up with. You can find his original script here: https://github.com/AdamFSU/Scripts

Here is what I worked out.

I have a CentOS 7 server running a postfix SMTP relay. Here is the postfix configuration, taken from an RHCE 7 class I took on Linux Academy:

- Install necessary packages

yum install postfix cyrus-sasl cyrus-sasl-plain -y

- Edit the config: vim /etc/postfix/main.cf

relayhost = [smtp.mailserver.com]

example: relayhost = [smtp.gmail.com]:587

smtp_use_tls = yes

smtp_sasl_auth_enable = yes

smtp_sasl_password_maps = hash:/etc/postfix/sasl_passwd

smtp_tls_CAfile = /etc/ssl/certs/ca-bundle.crt

smtp_sasl_security_options = noanonymous

smtp_sasl_tls_security_options = noanonymous

inet_protocols = ipv4

inet_interfaces = loopback-only

mynetworks = 127.0.0.0/8

myorigin = $myhostname

mydestination =

local_transport = error: local delivery disabled

- Create a sasl password file specifically for Gmail:

cd /etc/postfix

vim sasl_passwd

[smtp.gmail.com]:587 username@gmail.com:password

- Finish configuration of the sasl password file:

postmap sasl_passwd

chown root:postfix sasl_passwd

chmod 640 sasl_passwd

postmap sasl_passwd

- Enable and start necessary services

systemctl enable postfix && systemctl start postfix
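
Once postfix is running, one quick way to confirm the relay works is to hand it a test message over the loopback interface. The following is a minimal sketch, assuming postfix is listening on localhost port 25 (its default); the function names and the addresses are placeholders of mine, not part of the original setup.

```python
import smtplib
from email.message import EmailMessage


def build_test_message(sender, recipient):
    """Build a small plain-text message to push through the relay."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = "Postfix relay test"
    msg.set_content("If you can read this, the relay is working.")
    return msg


def send_via_local_postfix(msg, host="localhost", port=25):
    """Hand the message to the local postfix instance, which relays it onward."""
    with smtplib.SMTP(host, port, timeout=10) as smtp:
        smtp.send_message(msg)
```

Calling send_via_local_postfix(build_test_message("root@myserver.example", "youremail@gmail.com")) and then checking /var/log/maillog should show whether the relay host accepted the message.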

Now, here are the additional requirements for the script:

Prerequisites:

- Required Python packages: python-nmap – To install run: python3 -m pip install python-nmap

- The $PYTHONPATH environment variable must be set. Example: export PYTHONPATH=/usr/lib/python3/dist-packages/ (on CentOS, I added this to root’s .bashrc instead: export PYTHONPATH=/usr/local/lib/python3.6/site-packages/)

- This script also uses the mail utility for sending email alerts – For CentOS: install mailx

yum install mailx -y

- Flatfile called master_mac.txt with a listing of known MAC addresses on the LAN

- The nmap utility

yum install nmap -y
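
Before running the script, the prerequisites listed above can be checked in one go. This is a minimal sketch, not part of the original script: the function name and its parameters are my own, and it assumes master_mac.txt lives in the home directory, as the script below expects.

```python
import importlib.util
import os
import shutil


def missing_prerequisites(which=shutil.which, find_spec=importlib.util.find_spec,
                          euid=None, masterfile=None):
    """Return a list of human-readable problems; an empty list means all good."""
    problems = []
    if euid is None:
        euid = os.geteuid()
    if masterfile is None:
        masterfile = os.path.join(os.path.expanduser("~"), "master_mac.txt")
    # The script must run as root, otherwise nmap will not report MAC addresses
    if euid != 0:
        problems.append("not running as root")
    # Both command-line utilities must be on the PATH
    for tool in ("nmap", "mail"):
        if which(tool) is None:
            problems.append("'{0}' utility not found on PATH".format(tool))
    # python-nmap is imported as the 'nmap' module
    if find_spec("nmap") is None:
        problems.append("python-nmap module not importable (check PYTHONPATH)")
    if not os.path.isfile(masterfile):
        problems.append("master file not found: {0}".format(masterfile))
    return problems
```

Printing the result of missing_prerequisites() as root gives a quick checklist of what still needs to be installed or configured.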

Here is the python script:

#!/usr/bin/python3

# *** NOTE: THIS SCRIPT MUST BE RUN AS ROOT ***

# python module imports

import os

import shutil

import nmap

import sys

import subprocess

from datetime import datetime

# variable containing the filepath of the nmap scan results file

mac_list = os.environ['HOME'] + "/maclist.txt"

# variable containing the filepath of the approved mac addresses on the LAN file

masterfile = os.environ['HOME'] + "/master_mac.txt"

# If a file from previous nmap scans exists, create a backup of the file

if os.path.exists(mac_list):

    # Include the day in the timestamp so backups from different days do not overwrite each other

    shutil.copyfile(mac_list, os.environ['HOME'] + '/maclist_' + datetime.now().strftime("%Y_%m_%d_%H:%M") + '.log.bk')

# Open a new file for the new nmap scan results

f = open(mac_list, "w+")

# Print to console this warning

print("Don't forget to update your network in the nmap scan of this script")

# This block of code will scan the network and extract the IP and MAC address

# Create port scanner object, nm

nm = nmap.PortScanner()

# Perform: nmap -oX - -n -sn 192.168.0.1/24

# *** NOTE: -sP has changed to -sn for newer versions of nmap! ***

# Change this to your network IP range

nm.scan(hosts='192.168.0.1/24', arguments='-n -sn')

# Retrieve results for all hosts found

nm.all_hosts()

# For every host result, write their mac address and ip to the $mac_list file

for host in nm.all_hosts():

    # If 'mac' expression is found in results write results to file

    if 'mac' in nm[host]['addresses']:

        f.write(str(nm[host]['addresses']) + "\n")

    # If 'mac' expression isn't found in results print warning

    elif nm[host]['status']['reason'] != "localhost-response":

        print("MAC addresses not found in results, make sure you run this script as root!")

# Close file for editing

f.close()

# Open file for reading

f = open(mac_list, "r")

# Read each line of file and store it in a list

mac_addresses = f.read().splitlines()

# Close file for reading

f.close()

# Open masterfile for reading

if os.path.isfile(masterfile):

    f2 = open(masterfile, "r")

else:

    print("Could not find master_mac file! Verify file path is correct!")

    sys.exit()

# Read each line of file and store it in a list

master_mac_addresses = f2.read().splitlines()

# Close file for reading

f2.close()

# Create empty list of new devices found on network

new_devices = []

# For every list entry in the mac_addresses list

for i in mac_addresses:

    # Convert the list entry from a string to a dictionary so we can parse out mac address for comparison

    dic = eval(i)

    # Compare mac address portion of dictionary to mac addresses in the master_mac_addresses file

    # If the scanned mac address is not in the master_mac_addresses file

    if dic['mac'] not in master_mac_addresses:

        # Add scanned mac address to new devices list

        new_devices.append(dic)

# If the new_devices list isn't empty

if len(new_devices) != 0:

    # output a warning to the console

    warning = "\nWARNING!! NEW DEVICE(S) ON THE LAN!! - UNKNOWN MAC ADDRESS(ES): " + str(new_devices) + "\n"

    print(warning)

    # Create email notification of the warning

    try:

        # subject of email

        subject = "WARNING, new device on LAN!"

        # content of email

        content = "New unknown device(s) on the LAN: " + str(new_devices)

        # shell process of sending email with the mail utility (mailx)

        m1 = subprocess.Popen('echo "{content}" | mail -s "{subject}" youremail@gmail.com'.format(

            content=content, subject=subject), shell=True)

        # output whether email was successful in sending or not

        print(m1.communicate())

    # if sending of the email fails, this will output why

    except OSError as e:

        print("Error sending email: {0}".format(e))

    except subprocess.SubprocessError as se:

        print("Error sending email: {0}".format(se))
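
The comparison step in the script matches each scanned MAC string against the master file exactly as written, so a lowercase or dash-separated entry in master_mac.txt would be reported as unknown. Below is a minimal sketch of a more forgiving comparison. The helper names are mine, skipping # comment lines in the master file is my own assumption, and the scan results are assumed to have the same {'ipv4': ..., 'mac': ...} shape that the script writes to maclist.txt.

```python
def normalize_mac(mac):
    """Upper-case a MAC address and use ':' as the separator."""
    return mac.strip().upper().replace("-", ":")


def load_known_macs(lines):
    """Turn the lines of master_mac.txt into a set, skipping blanks and # comments."""
    known = set()
    for line in lines:
        line = line.strip()
        if line and not line.startswith("#"):
            known.add(normalize_mac(line))
    return known


def find_unknown_devices(scanned, known):
    """Return the scanned address dicts whose MAC is not in the known set."""
    return [addr for addr in scanned
            if "mac" in addr and normalize_mac(addr["mac"]) not in known]
```

Using a set also keeps the lookup fast if the list of known devices grows.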

Next, make sure your Gmail account is set up correctly by enabling less secure app access in your Google account security settings. Then set up a crontab under root that looks something like this:

#scan the network every 10 minutes

*/10 * * * * /root/bin/nmapscan_wo_log.py > /dev/null 2>&1

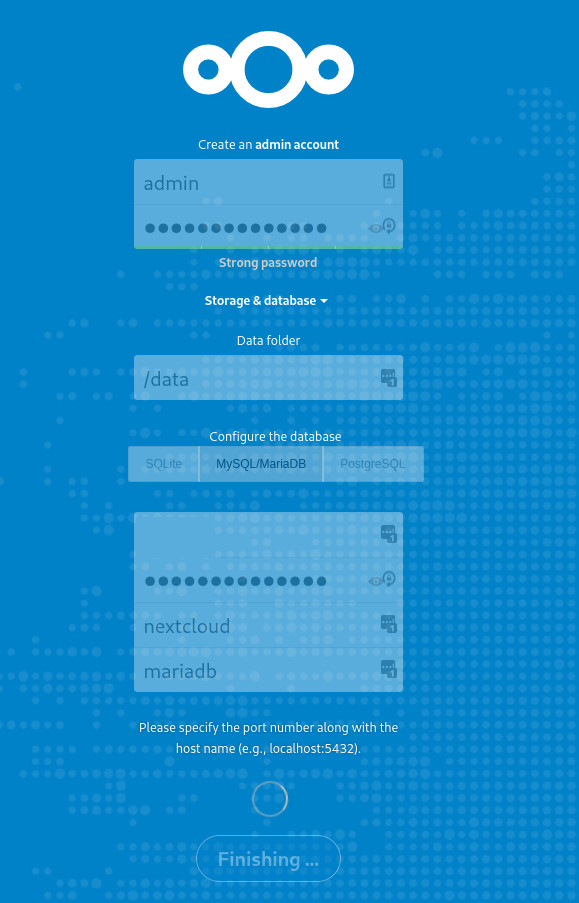

Nextcloud deployment via Docker

It’s been a long time since posting but I thought I would document my deployment for others, and my future self, in case the same issues are discovered.

I recently decided to replace my Ubuntu server instance running Nextcloud installed via snap on DigitalOcean with a CentOS 7 (my personal server preference) instance deployed via Docker container. In my search for containers I found the guys over at LinuxServer.io have containers on Docker Hub. After joining their Discord community I was directed by one of the community team members to the site’s blog post on deploying LetsEncrypt, MariaDB, and Nextcloud (with reverse proxy) all in one stroke. That blog post can be found here: Let’s Encrypt, Nginx & Reverse Proxy Starter Guide – 2019 Edition

Being a true noob at containers (I’ve taken classes but am still in the learning stage), I read the post and composed the docker compose file based on that article. That file is below. But, reader, if you have never set up Docker before, here are the steps I completed on CentOS 7 before getting started on deploying the containers.

First, install and set up Docker:

1. Install pre-req

sudo yum install -y yum-utils device-mapper-persistent-data lvm2

2. Add the repo

sudo yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

3. Install the community edition

sudo yum install -y docker-ce docker-ce-cli containerd.io

4. Start and enable the service

sudo systemctl start docker && sudo systemctl enable docker

5. Add user to the ‘docker’ group

sudo usermod -aG docker $(whoami)

6. Test the config

docker run hello-world

Second, install Docker compose:

*Note: the latest version and instructions can be found on Docker’s site here

1. Download the latest version via curl

sudo curl -L "https://github.com/docker/compose/releases/download/1.25.0/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

2. Change executable permissions

sudo chmod +x /usr/local/bin/docker-compose

3. Check the version

docker-compose --version
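
The download URL in step 1 is built from the release version plus the output of uname -s and uname -m. As a small illustration of how that URL is put together, here is a sketch that mirrors it with Python's platform module; the function name is my own, and 1.25.0 is simply the version used in this post.

```python
import platform


def compose_download_url(version, system=None, machine=None):
    """Build the docker-compose release URL for a given version and platform."""
    # platform.system() and platform.machine() mirror `uname -s` and `uname -m`
    system = system or platform.system()
    machine = machine or platform.machine()
    return ("https://github.com/docker/compose/releases/download/"
            "{0}/docker-compose-{1}-{2}".format(version, system, machine))
```

On a 64-bit Linux server, compose_download_url("1.25.0") produces the same URL that the curl command requests.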

Now, the next step for your cloud VPS, if you are deploying to the cloud, is to make sure the DNS for your public domain is set up correctly and points to your VPS. DigitalOcean has great documentation on this process.

Now, after all is configured on your server, compose your Docker compose file and deploy. Below is a sample from my file. Note that I have volumes mounted: so that I had enough space for my file synchronization, I also pay for block storage on DigitalOcean that is mounted to my VPS. Also, during the deployment, I had to generate an API token on DigitalOcean. If you view your LetsEncrypt docker log during the deployment (see the LinuxServer.io blog for reference) you will see it gripe about not having the proper credentials in the /config/dns-conf/digitalocean.ini file.

Docker compose

---

version: "3"

services:

  nextcloud:

    image: linuxserver/nextcloud

    container_name: nextcloud

    environment:

      - PUID=1001

      - PGID=1001

      - TZ=America/New_York

    volumes:

      - /mnt/myncvolume/nextcloud/config:/config

      - /mnt/myncvolume/nextcloud/data:/data

    depends_on:

      - mariadb

    restart: unless-stopped

  mariadb:

    image: linuxserver/mariadb

    container_name: mariadb

    environment:

      - PUID=1001

      - PGID=1001

      - MYSQL_ROOT_PASSWORD=myrootpassword

      - TZ=America/New_York

      - MYSQL_DATABASE=nextcloud

      - MYSQL_USER=myusername

      - MYSQL_PASSWORD=mypassword

    volumes:

      - /mnt/myncvolume/mariadb/config:/config

    restart: unless-stopped

  letsencrypt:

    image: linuxserver/letsencrypt

    container_name: letsencrypt

    cap_add:

      - NET_ADMIN

    environment:

      - PUID=1001

      - PGID=1001

      - TZ=America/New_York

      - URL=myurl.org

      - SUBDOMAINS=wildcard

      - VALIDATION=dns

      - DNSPLUGIN=digitalocean

      - EMAIL=myemail@myemaildomain.com

    volumes:

      - /mnt/myncvolume/letsencrypt/config:/config

    ports:

      - 443:443

      - 80:80

    restart: unless-stopped

The above compose file was not my original file, as I ran into problems with my original compose file during the initial Nextcloud configuration wizard, specifically at the section where you create an admin. The below screenshot is from when it finally worked. The problem I kept running into is that I was entering my actual name in the admin field and the root user in the bottom section, thinking I needed to use the database root user to create the admin account. Using the docker compose sample above as a reference, you place the MYSQL_USER that the container creates for you in the bottom section. And all is well.
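
On the credentials complaint in the LetsEncrypt log mentioned earlier: with the volume mapping in the compose file, the /config/dns-conf/digitalocean.ini file inside the container sits under /mnt/myncvolume/letsencrypt/config/dns-conf/ on the host. Below is a minimal sketch for checking that a token has actually been filled in. It assumes the file uses the key name dns_digitalocean_token, which is the convention of the certbot DigitalOcean DNS plugin as far as I know; verify the key name against the file the container generates. The function name is my own.

```python
def digitalocean_token(ini_text):
    """Return the configured token, or None if it is missing, empty, or commented out."""
    for raw in ini_text.splitlines():
        line = raw.strip()
        # Skip blank lines and comments
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        # Assumed key name; check it against your generated digitalocean.ini
        if sep and key.strip() == "dns_digitalocean_token":
            return value.strip() or None
    return None
```

Reading the ini file from the host path and passing its text to digitalocean_token() returns None until the API token has been pasted in.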

A Little Closer to a Launch

Video of SpaceX Falcon 9 on the 15th. I had to leave work to get home at a decent time that day so I couldn’t stay on Center during the launch. Luckily I was able to capture it from the NASA Causeway on the way out.

Toddler Safety Life Hack – Door Pinch Guard

My wife heard about this life hack online so I thought I would try it out on an old pool noodle we had lying around.

1. Grab a cheap noodle from your local dollar store. The one we had was about 3 1/2 inches wide.

2. Cut it about the length of your hand.

3. Cut it about 3/4 inch.

4. Place toward the top of the door. It fits snugly and works pretty well to prevent our toddler from jamming her hand in the door. She’s currently at that stage of wanting to close doors all the time.

Hurricane Irma

So, Hurricane Irma came right over us here in southeast Orlando early Monday morning. Being a native Floridian and having experienced several hurricanes in my lifetime, this one was by far the scariest. Below are some images of the experiences of waiting in line at Home Depot (almost 2 hours) and Lowe’s (3 hours) for plywood and visiting the local supermarket just a couple of days before the storm was to arrive and seeing empty shelves (we had actually stocked up on supplies earlier in the week). A couple of days before finding plywood at Lowe’s we had bought some lumber from Home Depot since they had no plywood and it took the most abuse from the storm on the side of the house. Thank God, through it all, despite almost 100 mph winds, we never lost power and only a few shingles on the top of the house.

We got most of the windows vulnerable to the most severe wind boarded up. Not pictured is our back sliding glass door. We did not have enough plywood from Lowe’s to board it up, but thanks to a friend I was able to get another sheet to cover it.

Here are some pictures of the aftermath.

We drove around on Monday after the 6pm curfew was lifted and saw plenty of trees broken or uprooted. This McDonald’s intercom is what surprised us the most, especially since that metal base was bent without remorse by Irma.

Goodbye, The Great Movie Ride

Today is a very sad day for me and many other The Great Movie Ride Cast Members, current and past. Today is the last day for The Ride. Disney will be closing its doors. The Magic of The Ride will be silenced. Am I being too dramatic? Maybe. But unless you have worked there and know what it was like to experience the friendships and memories of having worked at The Ride it will be difficult for you to truly understand the emotions we are going through. Sure, you may be a fan of The Ride and are saddened that it is closing. I was a fan too before I worked there and am still a fan. I love that ride. Of all the rides at Walt Disney World, it is my all-time favorite. Always has been my favorite. It probably comes from my love for movies. Disney created something that enhanced that movie magic I love to experience. Of the entire ride, the Finale was my favorite. Sitting there watching all those film clips always put a smile on my face even though I had viewed it, literally, hundreds of times while working there. And viewed it so many times before and after working there. It has never gotten old.

Below is a video of one of my Gangster days at The Great Movie Ride in 1999 when the park was called Disney’s MGM Studios. It was a good time. It was a fun time. It was a time in my life that holds a lot of wonderful memories. Those memories include not just of The Ride but memories of times outside The Ride spent with my fellow Cast Members. Times hanging out at The Atlantic Dance for some swing dancing, the Ale House, the ESPN Club, and at Super Bowl Parties to watch football. Thank you, The Great Movie Ride, and all of you that were there for those beautiful memories. It’s the stuff dreams are made of.

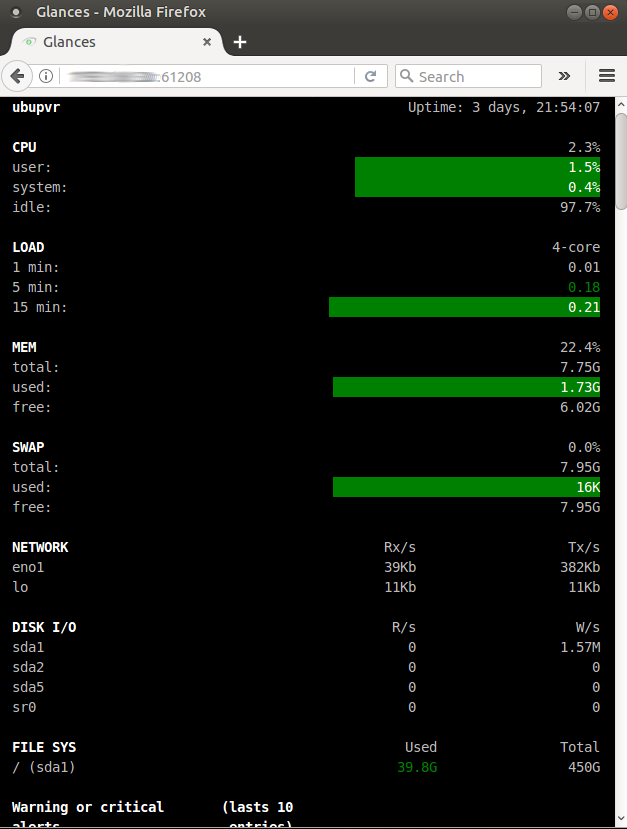

Glances – System Monitoring

Since I have only one (Ubuntu) server at home currently running as a PVR (personal video recorder) for recording TV shows from an OTA (over-the-air) antenna and Plex for viewing my content via the Roku (more on all that in a later post), I like to see what my server is doing as far as performance at a glance from time to time. Sure, top or htop will do the job but I want more. I get more from using Glances. You can get more information on the utility here.

I set up my server to run the web UI and launch at start. I created this script to easily deploy to any machine running Ubuntu. The script also creates a systemd service so that Glances starts after each reboot. Here’s the script:

#!/bin/bash
# This script will install the Glances monitoring tool and create
# a startup service for the Glances web server. See 'glances --help'
# for details

# Update and install
sudo apt update && sudo apt install -y glances
sleep 3

# Change the startup switch from false to true
sudo sed -i 's/false/true/g' /etc/default/glances
sleep 3

# Create the systemd service
sudo touch /lib/systemd/system/glances.service
sudo bash -c 'cat <<EOF > /lib/systemd/system/glances.service
[Unit]
Description = Glances web server
After = network.target

[Service]
ExecStart = /usr/bin/glances -w

[Install]
WantedBy=multi-user.target
EOF'
sleep 2

# Reload systemd
sudo systemctl daemon-reload
sleep 2
sudo systemctl enable glances.service
sleep 2
sudo systemctl restart glances.service
sleep 2
sudo systemctl status glances.service
sleep 2

hostip=`hostname -I`
echo
echo "Monitoring of this server will be viewable at http://$hostip:61208"
echo
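
After the script finishes, the web UI should be listening on port 61208. As a quick sanity check from another machine, here is a minimal sketch that only tests whether that TCP port accepts connections; the function name is mine, and it does not talk to Glances itself.

```python
import socket


def glances_is_up(host, port=61208, timeout=2.0):
    """Return True if something accepts TCP connections on the Glances web port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable host names
        return False
```

For example, glances_is_up("192.168.0.50") (a made-up address) returns False if the service failed to start or a firewall blocks the port.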

I have a third monitor above my other monitors that has a Raspberry Pi 3 connected where I have my Glances page running.

Here is a closer look at the web UI.

It’s been very convenient to view during recordings so that 1.) I know when there is a recording and conversion of the video file going on by seeing a spike in CPU and memory and 2.) to see how well my machine performs during those recordings and conversions.

Shell Scripting: Updates – Part 2

Where I work we don’t have anything like Red Hat Satellite to deploy updates to our thirteen Red Hat servers. So that I don’t have to touch each server manually to apply updates in a relatively controlled fashion, I wrote some shell scripts that will connect to each server, apply updates and then ask if you want to reboot. Again, I don’t normally reboot unless there is a kernel update.

This is the script I use to update servers in our Dev/Test and non-web Production servers:

#!/bin/bash
clear

# SSH to the server and run local yum update
echo "**** Connecting to server1 to update ****"
echo
ssh -t user@server1 sudo yum update -y 2>&1 | tee -a $HOME/Documents/yumlogs/Dev_Test/updates_server1_`date +%Y%m%d`.log
echo
echo "**** Finished updating server1 ****"
echo
read -p "Press [Enter] to continue"
clear
echo "----------------------------------------------------"
echo

# SSH to the server and run local yum update
echo "**** Connecting to server2 to update ****"
echo
ssh -t user@server2 sudo yum update -y 2>&1 | tee -a $HOME/Documents/yumlogs/Dev_Test/updates_server2_`date +%Y%m%d`.log
echo
echo "**** Finished updating server2 ****"
echo
read -p "Press [Enter] to continue"
clear
echo "----------------------------------------------------"
echo

# **************** REBOOTS SECTION *******************
echo "^^^ Do you want to reboot server1? (yes/no) ^^^"
read REPLY
if [ "$REPLY" == "yes" ]; then
    echo
    echo "*** WARNING: You have selected to reboot server1 ***"
    sleep 3
    # SSH to the server and run shutdown
    ssh -t user@server1 sudo shutdown -r +1 Rebooting in 1 minute
    sleep 30
elif [ "$REPLY" == "no" ]; then
    echo
    echo "--- server1 will not reboot ---"
    sleep 2
else
    echo
    echo "invalid answer, type yes or no";
fi
sleep 2
echo

echo "^^^ Do you want to reboot server2? (yes/no) ^^^"
read REPLY
if [ "$REPLY" == "yes" ]; then
    echo
    echo "*** WARNING: You have selected to reboot server2 ***"
    sleep 2
    # SSH to the server and run shutdown
    ssh -t user@server2 sudo shutdown -r +1 Rebooting in 1 minute
    sleep 30
elif [ "$REPLY" == "no" ]; then
    echo
    echo "--- server2 will not reboot ---"
    sleep 2
else
    echo
    echo "invalid answer, type yes or no";
fi
sleep 2
echo
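
The script above repeats the same block for every server, which gets long with thirteen of them. One possible alternative is to build the commands from a server list. The following is only a sketch of that idea, not what I actually run: the function names are mine, and the user, host, and log directory values are placeholders.

```python
import datetime
import os
import subprocess


def update_command(user, host):
    """Build the same ssh + yum update command the shell script runs."""
    return ["ssh", "-t", "{0}@{1}".format(user, host), "sudo", "yum", "update", "-y"]


def reboot_command(user, host):
    """Build the same ssh + shutdown command the shell script runs."""
    return ["ssh", "-t", "{0}@{1}".format(user, host),
            "sudo", "shutdown", "-r", "+1", "Rebooting in 1 minute"]


def log_path(host, log_dir, today=None):
    """Return a dated log file path such as updates_server1_20200115.log."""
    today = today or datetime.date.today()
    return os.path.join(log_dir, "updates_{0}_{1}.log".format(host, today.strftime("%Y%m%d")))


def run_and_log(command, logfile):
    """Run a command, echo its output, and append it to logfile (like tee -a)."""
    with open(logfile, "a") as log:
        proc = subprocess.Popen(command, stdout=subprocess.PIPE,
                                stderr=subprocess.STDOUT, universal_newlines=True)
        for line in proc.stdout:
            print(line, end="")
            log.write(line)
        return proc.wait()
```

A loop such as `for host in ["server1", "server2"]: run_and_log(update_command("user", host), log_path(host, log_dir))` would then replace the repeated update blocks.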

Then I use this script to run on my web servers that are in load balancers making sure to complete updates in a controlled fashion.

#!/bin/bash
# **************** THIS SCRIPT IS FOR UPDATING REMOTE SERVERS ON LOAD BALANCERS *******************
clear
echo
echo
echo -e " ---->> \033[33;7mREMEMBER TO COMPLETE A SNAPSHOT OF THE SERVERS BEFORE PROCEEDING\033[0m <<----"
echo
sleep 5
read -p "Press [ENTER] to continue "
echo
echo "**** Please remove WEB1 from load balance rotation ****"
echo
sleep 5
read -p "Once the WEB1 has been removed from rotation press [Enter] to continue to complete updates "
clear
echo

# **************** UPDATES SECTION FOR WEB1 *******************
# SSH to the server and run local yum update
echo "**** Connection to WEB1 to update ****"
echo
ssh -t username@WEB1 sudo yum update -y 2>&1 | tee -a $HOME/updates_web1_`date +%Y%m%d`.log
echo
echo "**** Finished updating WEB1 ****"
clear
echo

# **************** REBOOTS SECTION FOR WEB1 *******************
echo "^^^ Do you want to reboot WEB1? (yes/no) ^^^"
read REPLY
if [ "$REPLY" == "yes" ]; then
    echo
    echo "*** WARNING: You have selected to reboot WEB1 ***"
    sleep 3
    echo
    # SSH to the server and run shutdown
    ssh -t username@WEB1 sudo shutdown -r +1 Rebooting in 1 minute
    sleep 30
elif [ "$REPLY" == "no" ]; then
    echo
    echo "--- WEB1 will not reboot ---"
    sleep 2
else
    echo
    echo "invalid answer, type yes or no";
fi
echo
echo "**** Please test WEB1 before moving on to the next phase ****"
sleep 5
echo
read -p "Once WEB1 is up after a reboot (if rebooted) and has been tested press [Enter] to continue to WEB2"
echo
# **************** DONE WITH REBOOTS SECTION FOR WEB1 *******************

clear
echo "**** Please add WEB1 back into rotation and remove WEB2 from load balance rotation ****"
echo
sleep 5
read -p "Once WEB2 has been removed from rotation press [Enter] to continue to complete updates "
clear
echo

# **************** UPDATES SECTION FOR WEB2 *******************
# SSH to the server and run local yum update
echo "**** Connection to WEB2 to update ****"
echo
ssh -t username@WEB2 sudo yum update -y 2>&1 | tee -a $HOME/updates_104_`date +%Y%m%d`.log
echo
echo "**** Finished updating WEB2 ****"
clear
echo

# **************** REBOOTS SECTION FOR WEB2 *******************
echo "^^^ Do you want to reboot WEB2? (yes/no) ^^^"
read REPLY
if [ "$REPLY" == "yes" ]; then
    echo
    echo "*** WARNING: You have selected to reboot WEB2 ***"
    sleep 3
    echo
    # SSH to the server and run shutdown
    ssh -t username@WEB2 sudo shutdown -r +1 Rebooting in 1 minute
    sleep 30
elif [ "$REPLY" == "no" ]; then
    echo
    echo "--- WEB2 will not reboot ---"
    sleep 2
else
    echo
    echo "invalid answer, type yes or no";
fi
echo
echo "**** Please test the WEB2 before moving on to the next phase ****"
sleep 5
echo
read -p "Once WEB2 is up after a reboot (if rebooted) and has been tested, add WEB2 back into rotation and press [Enter] to continue "
echo
# **************** DONE WITH REBOOTS SECTION FOR WEB2 *******************
clear
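
The load-balancer script walks each web server through the same sequence, one server at a time, and its yes/no prompt moves on after an invalid answer instead of asking again. Below is a small sketch of both ideas in Python: the order of steps as a generator, and a prompt that keeps asking until it gets yes or no. The names are my own and the step descriptions paraphrase the script.

```python
def rolling_update_plan(servers):
    """Yield (server, step) pairs in the order the script walks through them."""
    steps = ("remove from load balancer rotation",
             "run yum update",
             "reboot if needed",
             "test",
             "add back into rotation")
    # Finish every step on one server before touching the next one
    for server in servers:
        for step in steps:
            yield server, step


def ask_yes_no(prompt, read=input):
    """Keep asking until the answer is yes or no; return True for yes."""
    while True:
        answer = read(prompt).strip().lower()
        if answer == "yes":
            return True
        if answer == "no":
            return False
        print("invalid answer, type yes or no")
```

Finishing all five steps on WEB1 before starting WEB2 is what keeps at least one server in rotation throughout the update.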